Wednesday, January 30, 2008

Clariion Disk Format

The Clariion formats its disks in Blocks. Each Block written out to the disk is 520 bytes in size. Of the 520 bytes, 512 bytes are used to store the actual DATA written to the block. The remaining 8 bytes per block are used by the Clariion to store System Information, such as a Timestamp, Parity Information, and Checksum Data.

Element Size – The Element Size of a disk is determined when a LUN is bound to the RAID Group. In previous versions of Navisphere, a user could configure the Element Size from 4 blocks per disk to 256 blocks per disk. Now, the default Element Size in Navisphere is 128 blocks. This means that the Clariion will write 128 blocks of data to one physical disk in the RAID Group before moving to the next disk in the RAID Group and writing another 128 blocks to that disk, and so on.

Chunk Size – The Chunk Size is the amount of Data the Clariion writes to a physical disk at a time. The Chunk Size is calculated by multiplying the Element Size by the amount of Data per block written by the Clariion.

128 blocks x 512 bytes of Data per block = 65,536 bytes of Data per Disk, which is equal to 64 KB. So the Chunk Size, the amount of Data the Clariion writes to a single disk before writing to the next disk in the RAID Group, is 64 KB.
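The arithmetic above can be sketched in a few lines of Python. This is only an illustration of the calculation; the function and constant names are made up for this example and are not part of Navisphere or any EMC tool.

```python
# Figures taken from the text above; the names are hypothetical.
BLOCK_SIZE_ON_DISK = 520    # bytes per block as formatted by the Clariion
DATA_BYTES_PER_BLOCK = 512  # bytes of host data stored in each block
DEFAULT_ELEMENT_SIZE = 128  # blocks written to one disk before moving on


def chunk_size_bytes(element_size_blocks: int = DEFAULT_ELEMENT_SIZE) -> int:
    """Host data written to one disk before moving to the next disk."""
    return element_size_blocks * DATA_BYTES_PER_BLOCK


def on_disk_bytes(element_size_blocks: int = DEFAULT_ELEMENT_SIZE) -> int:
    """Physical bytes used by one element, including the 8 extra bytes per block."""
    return element_size_blocks * BLOCK_SIZE_ON_DISK


if __name__ == "__main__":
    print(chunk_size_bytes())          # 65536 bytes of data
    print(chunk_size_bytes() // 1024)  # 64 (KB)
    print(on_disk_bytes())             # 66560 bytes including system information
```

Passing a different Element Size, such as the old minimum of 4 blocks, shows how small the chunk could be on earlier Navisphere versions (4 x 512 = 2,048 bytes).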

LUNs

As stated in the Host Configuration Slide, a LUN is the disk space that is created on the Clariion. The LUN is the space that is presented to the host. The host will see the LUN as a “Local Disk.”

In Windows, the Clariion LUN will show up in Disk Manager as Drive #, which the Windows Administrator can now format, partition, assign a Drive Letter, etc…

In UNIX, the Clariion LUN will show up as a c_t_d_ address, which the UNIX Administrator can now mount.

A LUN is owned by a single Storage Processor at a time. When creating a LUN, you assign the LUN to a Storage Processor, either SP A or SP B, or let the Clariion choose by selecting AUTO. The Auto option lets the Clariion assign the next LUN to the Storage Processor with the fewest number of LUNs.
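As a rough illustration of the AUTO option, the sketch below picks the Storage Processor that currently owns the fewest LUNs. It is not how Flare implements it; the function name is hypothetical, and the tie-breaking rule (the alphabetically first SP wins) is an assumption, since the behavior on a tie is not described here.

```python
def auto_assign_sp(lun_counts: dict[str, int]) -> str:
    """Return the SP that owns the fewest LUNs.

    Assumption: on a tie, the alphabetically first SP name is chosen.
    """
    return min(sorted(lun_counts), key=lambda sp: lun_counts[sp])


if __name__ == "__main__":
    # Hypothetical starting point: SP A owns 3 LUNs, SP B owns 2.
    counts = {"SP A": 3, "SP B": 2}
    for _ in range(3):
        owner = auto_assign_sp(counts)
        counts[owner] += 1
        print(owner)
```

Binding three LUNs in a row with AUTO from that starting point alternates between the two Storage Processors, which is the balancing effect the option is meant to give.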

The Properties/Settings of a LUN during creation/binding are:

1. Selecting which RAID Group the LUN will be bound to.

2. If it is the first LUN created on a RAID Group, the first LUN will set the RAID Type for the entire RAID Group. Therefore, when creating/binding the first LUN on a RAID Group, you can select the RAID Type.

3. Select a LUN Id or number for the LUN.

4. Specify a REBUILD PRIORITY for the LUN in the event of a Hot Spare replacing a failed disk.

5. Specify a VERIFY PRIORITY for the LUN to determine the speed at which the Clariion runs a “SNIFFER” in the background to scrub the disks.

6. Enable or Disable Read and Write Cache at the LUN level. An example might be to disable Read/Write Cache for a LUN that is given to a Development Server. This ensures that the Development LUNs will not use the Cache that is needed for Production Data.

7. Enable Auto Assign. By default this box is unchecked in Navisphere. That is because you will have some sort of Host Based Software that will manage the trespassing and failing back of a LUN.

8. Number of LUNs to Bind. You can bind up to 128 LUNs on a single RAID Group.

9. SP Ownership. You can select if you want your LUN(s) to belong to SP A, SP B, or the AUTO option in which the Clariion decides LUN ownership based on the Storage Processor with the fewest number of LUNs.

10. LUN Size. You specify the size of a LUN by entering the numbers, and selecting MB (MegaBytes), GB (GigaBytes), TB (TeraBytes), or Block Count to specify the number of blocks a LUN will be. This is critical for SnapView Clones, and MirrorView Secondary LUNs.

The number of LUNs a Clariion can support is model specific.

CX 300 – 512 LUNs

CX3-20 – 1024 LUNs

CX3-80 – 2048 LUNs

Tuesday, January 29, 2008

RAID 1_0

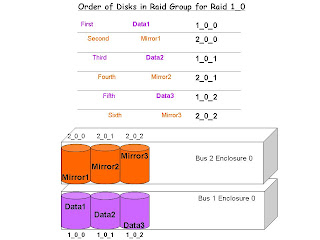

Order of Disks in RAID Group for RAID 1_0.

When creating a RAID 1_0 RAID Group, it is important to understand that the order in which the drives are put into the RAID Group will absolutely make a difference in the Performance and Protection of that RAID Group. If left to the Clariion, it will simply choose the next disks in the order in which it sees the disks to create the RAID Group. However, this may not be the best way to configure a RAID 1_0 RAID Group. Navisphere will take the next disks available, which are usually right next to one another in the same enclosure.

In a RAID 1_0 Group, we want the RAID Group to span multiple enclosures as illustrated above. As we can see, the Data Disks will be on Bus 1_Enclosure 0, and the Mirrored Data Disks will be on Bus 2_Enclosure 0. The first advantage of creating the RAID Group this way is Protection: we place the Data and Mirrors on two separate enclosures. In the event of an enclosure failure, the other enclosure could still be alive and maintaining access to the data or the mirrored data. The second advantage is Performance. Performance could be gained through this configuration because you are spreading the workload of the application across two different buses on the back of the Clariion.

Notice the order in which the disks were placed into the RAID 1_0 Group. In order for the disks to be placed into the RAID Group in this order, they must be manually entered this way via Navisphere or the Command Line.

The first disk into the RAID Group receives Data Block 1.

The second disk into the RAID Group receives the Mirror of Data Block 1.

The third disk into the RAID Group receives Data Block 2.

The fourth disk into the RAID Group receives the Mirror of Data Block 2.

The fifth disk into the RAID Group receives Data Block 3.

The sixth disk into the RAID Group receives the Mirror of Data Block 3.

If we let the Clariion choose these disks in its particular order, it would select them:

First disk – 1_0_0 (Data Block 1)

Second disk – 1_0_1 (Mirror of Data Block 1)

Third disk – 1_0_2 (Data Block 2)

Fourth disk – 2_0_0 (Mirror of Data Block 2)

Fifth disk – 2_0_1 (Data Block 3)

Sixth disk – 2_0_2 (Mirror of Data Block 3)

This defeats the purpose of having the Mirrored Data on a different enclosure than the Data Disks.
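The ordering rule can be sketched in Python. This is only an illustration of the pairing described above (disks 1 and 2 form a mirrored pair, then disks 3 and 4, and so on); the function names are hypothetical and nothing here calls Navisphere or the Command Line.

```python
def raid10_disk_order(primaries, mirrors):
    """Interleave data disks and mirror disks: data, mirror, data, mirror, ..."""
    if len(primaries) != len(mirrors):
        raise ValueError("each data disk needs exactly one mirror disk")
    order = []
    for data_disk, mirror_disk in zip(primaries, mirrors):
        order.extend([data_disk, mirror_disk])
    return order


def mirrored_pairs(order):
    """Group an ordered disk list into (data, mirror) pairs."""
    return [(order[i], order[i + 1]) for i in range(0, len(order), 2)]


def enclosure(disk):
    """Return the (bus, enclosure) part of a Bus_Enclosure_Disk address."""
    bus, encl, _slot = disk.split("_")
    return (bus, encl)


def pairs_span_enclosures(order):
    """True if every data disk sits in a different enclosure than its mirror."""
    return all(enclosure(a) != enclosure(b) for a, b in mirrored_pairs(order))


if __name__ == "__main__":
    manual = raid10_disk_order(["1_0_0", "1_0_1", "1_0_2"],
                               ["2_0_0", "2_0_1", "2_0_2"])
    default = ["1_0_0", "1_0_1", "1_0_2", "2_0_0", "2_0_1", "2_0_2"]
    print(manual)                          # data and mirror disks alternate
    print(pairs_span_enclosures(manual))   # True
    print(pairs_span_enclosures(default))  # False
```

The manually interleaved order keeps each mirror on the other bus and enclosure, while the default order pairs 1_0_0 with 1_0_1 in the same enclosure.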

RAID Groups and Types

RAID GROUPS and RAID Types

The above slide illustrates the concept of creating a RAID Group and the supported RAID types of the Clariions.

RAID Groups

The concept of a RAID Group on a Clariion is to group together a number of disks on the Clariion into one big group. Let’s say that we need a 1 TB LUN. The disks we have are 200 GB in size. We would have to group together five (5) disks to get to the 1 TB size needed for the LUN. I know we haven’t taken into account parity or the RAW capacity of a drive, but that is just a very basic idea of what we mean by a RAID Group. RAID Groups also allow you to configure the Clariion in a way so that you will know what LUNs, Applications, etc…live on what set of disks in the back of the Clariion. For instance, you wouldn’t want an Oracle Database LUN on the same RAID Group (Disks) as a SQL Database running on the same Clariion. RAID Groups allow you to create one RAID Group from a number of disks for the Oracle Database, and another RAID Group from a different set of disks for the SQL Database.

RAID Types

Above are the supported RAID types of the Clariion.

RAID 0 – Striping Data with NO Data Protection. The Clariion’s Cache will write the data out to disk in blocks (chunks) that we will discuss later. For RAID 0, the Clariion writes/stripes the data across all of the disks in the RAID Group. This is fantastic for performance, but if one of the disks fails in the RAID 0 Group, then the data will be lost because there is no protection of that data (i.e. mirroring, parity).

RAID 1 – Mirroring. The Clariion will write the Data out to the first disk in the RAID Group, and write the exact same data to another disk in that RAID 1 Group. This is great in terms of data protection because if you were to lose the data disk, the mirror would have an exact copy of the data disk, allowing the user to keep accessing the data.

RAID 1_0 – Mirroring and Striping Data. This is the best of both worlds if set up properly. This type of RAID Group will allow the Clariion to stripe data and mirror the data onto other disks. However, the illustration of RAID 1_0 above is not the best way of configuring that type of RAID Group. The next slide will go into detail as to why this isn’t the best method of configuring RAID 1_0.

RAID 3 – Striping Data with a Dedicated Parity Drive. This type of RAID Group allows the Clariion to stripe data across the first X number of disks in the RAID Group, and dedicate the last disk in the RAID Group to Parity for the data stripe. In the event of a single drive failure in this RAID Group, the failed disk can be rebuilt from the remaining disks in the RAID Group.

RAID 5 – Striping Data with Distributed Parity. RAID type 5 allows the Clariion to distribute the Parity information to rebuild a failed disk across the disks that make up the RAID Group. As in RAID 3, in the event of a single drive failure in this RAID Group, the failed disk can be rebuilt from the remaining disks in the RAID Group.

RAID 6 – Striping Data with Double Parity. This is new to the Clariion world starting in Flare Code 26 of Navisphere. The simplest explanation of RAID 6 is that the RAID Group uses striping, as in RAID 5, with double the parity. This allows a RAID 6 RAID Group to have two drive failures in the RAID Group while maintaining access to the LUNs.

HOT SPARE – A Dedicated Single Disk that Acts as a Failed Disk. A Hot Spare is created as a single disk RAID Group, and is bound/created as a HOT SPARE in Navisphere. The purpose of this disk is to act as the failed disk in the event of a drive failure. Once a disk is set as a HOT SPARE, it is always a HOT SPARE, even after the failed disk is replaced. In the slide above, we list the steps of a HOT SPARE taking over in the event of a disk failure in the Clariion.

1. A disk fails – a disk fails in a RAID Group somewhere in the back of the Clariion.

2. Hot Spare is Invoked – a Clariion dedicated HOT SPARE acts as the failed disk in Navisphere. It will assume the identity of the failed disk’s Bus_Enclosure_Disk Address.

3. Data is REBUILT Completely onto the Hot Spare from the other disks in the RAID Group – The Clariion begins to recalculate and rebuild the failed disk onto the Hot Spare from the other disks in the RAID Group, whether it be copying from the MIRRORed copy of the disk, or through parity and data calculations of a RAID 3 or RAID 5 Group.

4. Disk is replaced – Somewhere throughout the process, the failed drive is replaced.

5. Data is Copied back to new disk – The data is then copied back to the new disk that was replaced. This will take place automatically, and will not begin until the failed disk is completely rebuilt onto the Hot Spare.

6. Hot Spare is back to a Hot Spare – Once the data is written from the Hot Spare back to the failed disk, the Hot Spare goes back to being a Hot Spare waiting for another disk failure.

Hot Spares are going to be size and drive type specific.

Size. The Hot Spare must be at least the same size as the largest size disk in the Clariion. A Hot Spare will replace a drive that is the same size or a smaller size drive. The Clariion does not allow multiple smaller Hot Spares to replace a failed disk.

Drive Type Specific. If your Clariion has a mixture of Drive Types, such as Fibre and SATA disks, you will need Hot Spares of those particular Drive Types. A Fibre Hot Spare will not replace a failed SATA disk and vice versa.

Hot Spares are not assigned to any particular RAID Group. They are used by the Clariion in the event of any failure of that Drive Type. The recommendation for Hot Spares is one (1) Hot Spare for every thirty (30) disks.
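The size and drive type rules can be expressed as a small Python check. This is a sketch of the rules as stated above, not of the Clariion’s actual selection logic; the `Disk` class and function names are hypothetical, and rounding the one-per-thirty recommendation up to the next whole spare is an assumption.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Disk:
    address: str     # Bus_Enclosure_Disk address, e.g. "0_0_14"
    drive_type: str  # e.g. "Fibre" or "SATA"
    size_gb: float


def can_replace(spare: Disk, failed: Disk) -> bool:
    """A Hot Spare must match the drive type and be at least as large."""
    return spare.drive_type == failed.drive_type and spare.size_gb >= failed.size_gb


def recommended_hot_spares(total_disks: int, disks_per_spare: int = 30) -> int:
    """One Hot Spare per thirty disks (assumption: partial groups round up)."""
    return math.ceil(total_disks / disks_per_spare)


if __name__ == "__main__":
    spare = Disk("0_0_14", "Fibre", 300)
    print(can_replace(spare, Disk("1_0_3", "Fibre", 146)))  # True
    print(can_replace(spare, Disk("2_0_5", "SATA", 250)))   # False, wrong drive type
    print(recommended_hot_spares(120))                      # 4
```

In an array with mixed Drive Types, the count would apply to each Drive Type separately, since a spare of one type cannot cover the other.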

There are multiple ways to create a RAID Group. One is via the Navisphere GUI, and the other is through the Command Line Interface. In later slides we will list the commands to create a RAID Group.

The VAULT Drives

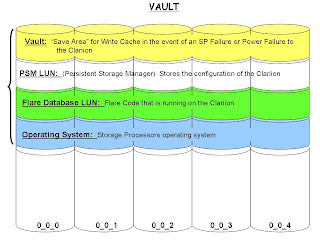

Vault Drives

All Clariions have Vault Drives. They are the first five (5) disks in all Clariions, Disks 0_0_0 through 0_0_4. The Vault drives on the Clariion contain some internal information that is pre-configured before you start putting data on the Clariion. The diagram shows what information is stored on the Vault Disks.

The Vault.

The vault is a ‘save area’ across the first five disks to store write cache from the Storage Processors in the event of a Power Failure to the Clariion, or a Storage Processor Failure. The goal here is to place write cache on disk before the Clariion powers off, therefore ensuring that you don’t lose the data that was committed to the Clariion and acknowledged to the host. The Clariions have the Standby Power Supplies that will keep the Storage Processors running as well as the first enclosure of disks in the event of a power failure. If there is a Storage Processor Failure, the Clariion will go into a ‘panic’ mode and fear that it may lose the other Storage Processor. To ensure that it does not lose write cache data, the Clariion will also dump write cache to the Vault Drives.

The PSM Lun.

The Persistent Storage Manager Lun stores the configuration of the Clariion, such as Disks, Raid Groups, Luns, Access Logix information, and the SnapView, MirrorView and SanCopy configuration. When this LUN was first introduced on the Clariions back on the FC4700s, it used to appear in Navisphere under the Unowned Luns container as Lun 223-PSM Lun. Users have not been able to see it in Navisphere for a while. However, you can grab the information of the Array’s Configuration by executing the following command.

naviseccli -h 10.127.35.42 arrayconfig -capture -output c:\arrayconfig.xml -format XML -schema clariion

Example of Information retrieved from the File:

For a Disk:

</CLAR:Disk>

<CLAR:Disk type="Category">

<CLAR:Bus type="Property">1</CLAR:Bus>

<CLAR:Enclosure type="Property">0</CLAR:Enclosure>

<CLAR:Slot type="Property">12</CLAR:Slot>

<CLAR:State type="Property">3</CLAR:State>

<CLAR:UserCapacityInBlocks type="Property">274845</CLAR:UserCapacityInBlocks>

For a LUN:

</CLAR:LUN>

<CLAR:LUN type="Category">

<CLAR:Name type="Property">LUN 23</CLAR:Name>

<CLAR:WWN type="Property">60:06:01:60:06:C4:1F:00:B1:51:C4:1B:B3:A2:DC:11</CLAR:WWN>

<CLAR:Number type="Property">6142</CLAR:Number>

<CLAR:RAIDType type="Property">1</CLAR:RAIDType>

<CLAR:RAIDGroupID type="Property">13</CLAR:RAIDGroupID>

<CLAR:State type="Property">Bound</CLAR:State>

<CLAR:CurrentOwner type="Property">1</CLAR:CurrentOwner>

<CLAR:DefaultOwner type="Property">2</CLAR:DefaultOwner>

<CLAR:Capacity type="Property">2097152</CLAR:Capacity>

Flare Database LUN.

The Flare Database LUN will contain the Flare Code that is running on the Clariion. I like to say that it is the application that runs on the Storage Processors that allows the SPs to create the Raid Groups, Bind the LUNs, setup Access Logix, SnapView, MirrorView, SanCopy, etc…

Operating System.

The Operating System of the Storage Processors is stored on the first five drives of the Clariion.

Now, please understand that this information is NOT in any way, shape or form laid out this way across these disks. We are only showing that this information is built onto these first five drives of the Clariion. This information does take up disk space as well. The amount of disk space that it takes up per drive is going to depend on what Flare Code the Clariion is running. Clariions running Flare Code 19 and lower will lose approximately 6 GB of space per disk. Clariions running Flare Code 24 and up will lose approximately 33 GB of space per disk. So, on a 300 GB fibre drive, where the actual raw capacity of the drive is 268.4 GB, you would subtract another 33 GB per disk for the Vault/PSM LUN/Flare Database LUN/Operating System information. That would leave you with about 235 GB per disk on the first five disks.
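The subtraction above can be written as a short Python sketch. The function names are hypothetical, and the figures are the approximate ones quoted above. No figure is given for Flare releases between 19 and 24, so the sketch refuses to guess for those.

```python
def vault_overhead_gb(flare_release: int) -> float:
    """Approximate space lost per Vault disk, using the figures quoted above."""
    if flare_release <= 19:
        return 6.0
    if flare_release >= 24:
        return 33.0
    # No figure is quoted for releases between 19 and 24.
    raise ValueError("no overhead figure available for this Flare release")


def usable_gb_on_vault_disk(raw_gb: float, flare_release: int) -> float:
    """Raw drive capacity minus the Vault/PSM/Flare Database/OS overhead."""
    return raw_gb - vault_overhead_gb(flare_release)


if __name__ == "__main__":
    # A 300 GB fibre drive has a raw capacity of about 268.4 GB.
    print(round(usable_gb_on_vault_disk(268.4, 26), 1))  # 235.4
    print(round(usable_gb_on_vault_disk(268.4, 19), 1))  # 262.4
```

This only applies to the first five disks (0_0_0 through 0_0_4); every other disk keeps its full raw capacity.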
